From Rust Source Code to Mathematical Proof: How We Verify Cryptographic and Safety-Critical Rust

How We Got Here

Our verification pipeline started as a collaboration with the Ethereum Foundation. The zkEVM Verification Project brought together teams working on machine-checked proofs for zero-knowledge virtual machines. Our task was concrete: build a pipeline that translates Rust cryptographic libraries (Plonky3, RISC Zero, and others) into Lean 4 using hax and aeneas, connect them to formal specification libraries (ArkLib and CompPoly), and prove that the production Rust code matches the abstract mathematical specifications.

The work is consensus-critical: for example a bug in a single FRI folding step silently invalidates every proof a zkVM produces.

The same pattern reaches beyond zero-knowledge proofs. The Beneficial AI Foundation's Signal Shot programme is funding a machine-checked proof of the Signal messaging stack in Lean 4. We are planning to take part.

Why now? AI-assisted attackers have raised the bar. They can iterate exploit attempts faster than humans can review code, and "we tested a lot" has stopped being a credible defence. Testing only finds the bugs you looked for. Formal verification produces a mathematical proof, checkable by a machine, that no bug of a given class exists for any input.

The Pipeline

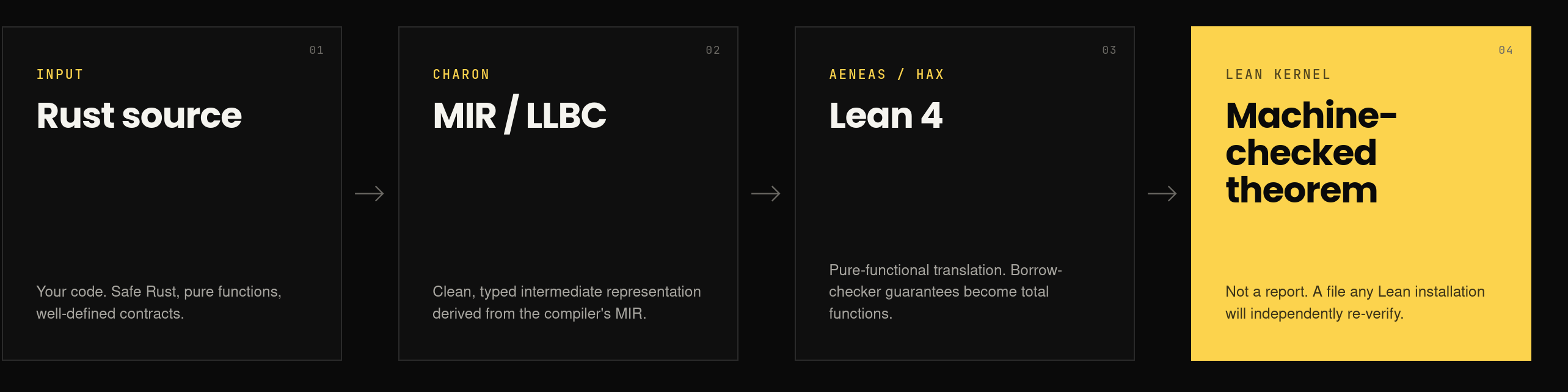

Rust source ──────▸ MIR / HIR ──────▸ Lean 4 ──────▸ Machine-checked

(your code) (Charon / Hax) (extracted) theorems

Three mature, open-source tools carry the weight:

-

Charon lowers Rust programs to a clean, typed intermediate representation (LLBC) derived from the compiler's MIR. LLBC is small enough to reason about and close enough to Rust that the extracted code is recognisably the same function.

-

Aeneas translates LLBC to pure-functional Lean 4. It uses Rust's borrow-checker guarantees to model every function as a pure Lean function. The result is wrapped in a

Resultmonad: success, panic, or non-termination. We do not prove memory safety. Rust already did that. -

Hax is an annotation-driven alternative that targets Lean 4 (and F*). We use Hax for Rust code that uses patterns Aeneas does not yet support, such as some dynamic-dispatch shapes.

The output is a Lean 4 file backed by Mathlib4, the standard mathematical library. Once the Rust code is in Lean, we write a formal specification of what it should do and prove that the extracted function matches it. Any Lean 4 installation will independently re-verify the result; a proof accepted by the Lean kernel is a proof, full stop.

Our Verification Work So Far

As participants in the zkEVM Verification Project, funded and coordinated by the Ethereum Foundation, we have applied this pipeline to a range of cryptographic and consensus-critical primitives.

| Target | Pipeline | What we proved |

|---|---|---|

| Plonky3 FRI round-scheduling | Aeneas | 6 theorems (bounds, monotonicity, boundary conditions), 0 sorry |

| Plonky3 arity-2 FRI fold | Aeneas + ArkLib | Rust implementation matches the abstract polynomial fold, no overflow in 13 checked operations, connection to formal field-theoretic specification |

| Plonky3 Mersenne31 field arithmetic | Aeneas | Verification of addition and multiplication for the Rust model of Plonky3's Mersenne31 prime field |

| Plonky3 Mersenne31 / KoalaBear fields | Hax | Lean 4 extraction of Rust models of Plonky3's Mersenne31 and KoalaBear field implementations |

| Plonky3 Horner polynomial evaluation | Hax + CompPoly | Evaluation correctness against formal polynomial specification |

| Plonky3 one-step FRI folding | Hax | End-to-end FRI round correctness |

| RISC Zero Merkle inclusion | Hax | Root recomputation and inclusion-proof verification |

| 32-bit ADC (addition with carry) | Hax + bv_decide | Bit-level correctness via automated bit-vector reasoning |

Some of these verifications connect to ArkLib, a formal cryptographic specification library in Lean 4 that defines polynomial folding, evaluation domains, Reed-Solomon codes, and the FRI protocol at the abstract mathematical level. This connects the implementation proof to the abstract cryptographic specification — what security audits should deliver, but most still cannot.

Interesting case is the FRI folding step, where Aeneas-extracted code is connected directly to ArkLib's polynomial fold — see aeneas-FRI/fold_step_lean (alongside the round-scheduling logic in aeneas-FRI/fold_arity_lean).

Building the Pipeline Was Not Trivial

A pipeline is only as strong as its weakest connection. In practice we ran into two classes of friction.

Lean 4 version drift

Aeneas, Hax, ArkLib, CompPoly, and Mathlib4 all evolve on independent Lean 4 release tracks. Early in the project we found that we could not build a single Lake project that imported tools from multiple sources at once: each was pinned to a different Lean toolchain. We raised the issue in the affected repositories, the maintainers coordinated across teams, and after a few rounds of discussion the libraries were aligned on a common Lean 4 version. This kind of cross-repository version coordination is invisible from the outside, but it is the difference between a working pipeline and three weeks of wasted effort.

Aeneas/Hax extraction gaps

It is important to mention: tools are evolving rapidly. At the time this work was conducted, we encountered some gaps in compilation/translation. However, by the time you're reading this blog post, some of the mentioned gaps may have already been addressed.

Aeneas and Hax extract safe Rust to pure Lean, but their supported subset is real and has hard edges. We hit several:

- A name collision (

BitVec.toNat_pow) between Aeneas's bit-vector lemmas and Batteries/mathlib prevented importing both in a single Lean file. We patched it upstream. - Casts to wider integer types (

u128) in modular arithmetic required careful proof engineering. - Generic functions with trait bounds (e.g.

F: Field + TwoAdicField) cannot be extracted directly: Aeneas needs concrete monomorphized types. - External-crate calls (e.g.

byteorder,std::io::Read/Write) are not extractable, because Charon does not see the MIR of crates beyond the workspace. whileloops are extracted as recursive functions and require explicit termination measures.

How we work around these gaps

We developed three reliable strategies:

-

Fix it upstream. When the gap was a missing feature or lemma we could supply, we did. Our merged contributions to Aeneas, Hax, and CompPoly all came out of friction we hit during real verification work.

-

Extract a model. When the production Rust code used patterns Aeneas could not handle (parallel iterators, deep generics,

byteordercalls), we rewrote the mathematical core of the function as a standalone, non-generic Rust crate. The resulting model is semantically equivalent to the production code, extracts cleanly, and can be verified end-to-end. We used this strategy for Plonky3'sfold_matrix(rewritten asfold_step); we would use it for any I/O-bound serialization code where the production version touchesstd::io. -

Layered tools for

unsafecode. The pipeline as described handles safe Rust. For codebases withunsafeblocks (memory-mapped buffers, transmutation tricks, manual lifetime management), the verification story is layered: prove the safe core with Aeneas/Hax, and bring in complementary tools for theunsafeparts. Two emerging tools we are watching closely are:- cargo-anneal (Google / zerocopy team), which extends Aeneas with the ability to write specifications and soundness proofs for

unsafeRust directly in doc comments. - anodized, another emerging tool in the same space.

Our hands-on experience is currently with safe code. For

unsafe-heavy codebases (such as parts of the Signal stack), we propose evaluating these layered tools as part of an initial scoping engagement, rather than promising blanket coverage from day one. - cargo-anneal (Google / zerocopy team), which extends Aeneas with the ability to write specifications and soundness proofs for

We Contribute to the Tools We Use

Formal verification is a systems problem. The prover is only as good as the toolchain beneath it: the extractor, the math library, the spec library. We are active upstream contributors to Aeneas and Hax, and we participate in expanding the range of Rust patterns these tools can extract.

We also contribute to the Lean 4 specification libraries we depend on, in particular CompPoly, which provides formal polynomial and finite-field theory underpinning ArkLib's FRI specifications. Our earlier contributions to CompPoly include complete multilinear transform functions and several error-correcting interpolation algorithms. Our current work focuses on multivariate exponentiation, serialization, and FFT-based interpolation — extensions that directly enable the next layer of cryptographic verification on top of CompPoly.

When we engage with a client, issues we discover during the work get fixed at the root of the toolchain, not patched over inside a single project. This compounds across engagements: every pilot makes the next one faster and cheaper.

What the Pipeline Is Good For

The best candidates for Aeneas and Hax extraction are pure, safe Rust functions with well-defined input–output contracts. Concrete examples we can verify today:

- Serialization and parsing: round-trip correctness, canonicity (rejection of non-minimal encodings), panic-freedom on malformed input, bounded read lengths. Consensus-critical in any Bitcoin-derived protocol, any transaction format, any RPC deserializer.

- Cryptographic primitives: modular arithmetic (multiplication, exponentiation, Barrett reduction), hash function cores, signature validation rules, commitment schemes, Merkle inclusion.

- Data structures: bitsets, sparse arrays, hash maps, tree-shaped containers where a subtle bug silently corrupts authorization or consensus decisions.

- Protocol logic: state machines, access-control predicates, consensus rules, packet filters, validation rules that must agree byte-for-byte across heterogeneous implementations.

- Arithmetic kernels: monetary amounts (overflow-free addition, conservation), fixed-point math, accumulators over bounded domains.

In practice, the right engagement model is to identify the critical core of a codebase (the functions whose correctness determines whether the whole system is sound) and verify those, leaving the integration layer to conventional testing. Heavily generic code can be handled but requires careful scoping.

What an Engagement Looks Like

A typical pilot runs four to eight weeks with two verification engineers, and produces self-contained deliverables:

- A standalone Rust extraction target: a subset of your codebase, isolated for verification.

- The Lean 4 translation produced by Aeneas or Hax.

- A formal specification of the correctness properties, reviewed with your team for semantic accuracy.

- Machine-checked proofs accepted by the Lean 4 kernel, with no

sorryand no unproved axioms. - A public or private repository with continuous integration that re-runs the proofs on every commit. Anyone, including your future auditors, can independently re-verify the work.

- A final report documenting scope, methodology, and any limitations encountered.

The first engagement typically targets a single function or small module, the kind of code where a hidden bug is most expensive to discover later. Follow-ups scale to full modules, protocol layers, or multi-year verification roadmaps.

Who This Is For

If you are building a cryptographic library, a cryptocurrency protocol, privacy infrastructure, safety-critical embedded software, or a regulated product that will face a rigorous audit, formal verification is no longer a luxury. It is the only technique that produces machine-checkable evidence of correctness — evidence that scales to all inputs and all attackers, and survives team turnover.

We have applied this pipeline to cryptographic primitives in Plonky3 and RISC Zero. The same pipeline applies to your Rust codebase.

Our Public Work

If you maintain a piece of Rust that is too important to be wrong, we would like to hear about it.

- The zkEVM Verification Project (this work, plus earlier Aeneas-based contributions): github.com/Verified-zkEVM/rust-lean

- Earlier formal verification work, including Hax-based contributions for the Ethereum Foundation: github.com/runtimeverification/publications#zkvm-formal-verification